
Author of Be Ready for Anything and Build a Better Pantry on a Budget online course

After the 2016 presidential election, accusations of foreign interference ran rampant. This, paired with President Trump’s surprise victory, caused social media outlets to crack down on “fake news.” And now, one major social media outlet has caused outrage among employees with a policy that allows paid advertisements by politicians to be completely exempt from “fact-checking.”

But before we get into that, let’s take a moment to look back at previous discussions of election interference.

Did the Russians change the outcome of the 2016 election?

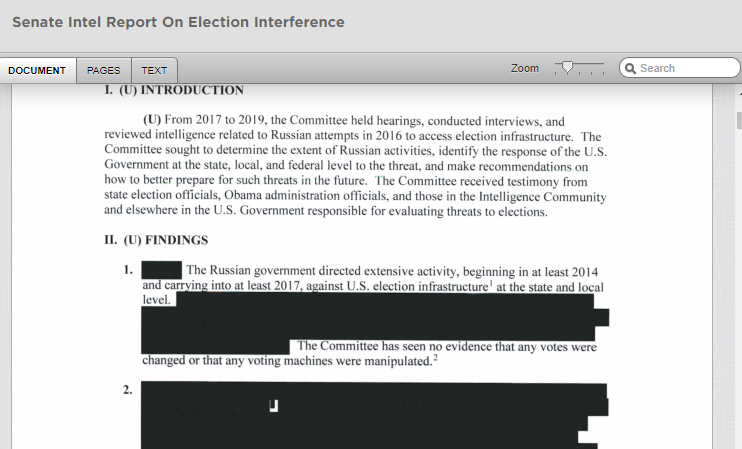

A two-year investigation by the Senate Intelligence Committee found that the “Russian government directed extensive activity…against the US election infrastructure.” However, the redacted report also says that the committee found “no evidence that any votes were changed or any voting machines were manipulated.” You can read the full (redacted) report here.

Even though this doesn’t seem to have had any effect on the outcome of the last election, the mainstream media still claim to this day that Russia is the cause of all our problems. Here’s one example from May 2019:

As lawmakers, state elections officials and social media executives work to limit intervention in the 2020 elections by Russia and other foreign operatives, an unsettling truth is emerging.

Vladimir Putin may already be succeeding.

The troubling disclosures of Russian meddling in the 2016 campaign – “sweeping and systematic,” special counsel Robert Mueller concluded in his report on the matter – have policymakers on guard for what intelligence officials say is a continuing campaign by Russia to influence American elections. But even if voting machines in all jurisdictions are secured against hacking and social media sites are scrubbed of fake stories posted by Russian bots, the damage may already have been done, experts warn, as Americans’ faith in the credibility of the nation’s elections falters.

“This is Vladimir Putin’s game plan – sow distrust, discord, disillusionment and division,” Sen. Richard Blumenthal, Democrat of Connecticut, says about the Russian leader. “It’s his playbook for all Western democracies – not just us, but Europe and around the world. We’re open societies, we’re vulnerable to disinformation, and he regards himself as superior because he controls the press,” adds Blumenthal, one of the authors of bipartisan legislation meant to improve election security. (source)

Alternative news sites began to be targeted.

Right after the election, alternative news sites began to be targeted by the mainstream media.

Who can forget the outrageous and unfounded accusations, reported by the Washington Post, that were leveled against bloggers and alternative news websites, accusing them of either being Russian operatives or in cahoots with the Russians?

That’s when the term “fake news” began to be used as a weapon. Now, any time someone on the internet sees a report that doesn’t align with their cognitive bias, they exclaim “Fake news!!!” regardless of the accuracy of the report. Let’s face it, “fake news” could just be used interchangeably with “stuff someone doesn’t like.”

And the targeting didn’t stop with the mainstream media.

Facebook, YouTube, and Twitter began last year to purge their networks of some of the most popular alternative news sites around, wiping out tens of millions of followers overnight. The life’s work of people who wanted others to have an alternative view of politics and the way our country interacts with the world vanished in the snap of a finger.

Facebook has vowed to fight “fake news”

Facebook has allotted a great deal of money to fighting “fake news” during the next election.

With just over a year left until the 2020 U.S. presidential elections, Facebook updated its policies on the spread of misinformation and released a bunch of new tools to better “protect the democratic process” in a post published yesterday. Now, Facebook will clearly label false posts and state-controlled media, and will invest $2 million in media literacy projects to help people understand the information they’re seeing online…

…Over the next month, content published on Facebook and Instagram that has been rated false or partly false by a third-party fact-checker will be more prominently labeled so people can better decide for themselves what to read, trust, and share. A pop-up will also appear when users attempt to share posts on Instagram that include content that’s been debunked by its fact-checkers…

…Facebook revealed it has removed four networks found to be fake, state-backed misinformation-spreading accounts based in Iran and Russia — countries that have recently been found to cross borders to spread misinformation on not just their in-apps but on a global scale too.

Alongside these updates to protect voters in the states, the tech giant introduced a security tool for elected officials and candidates that monitors their accounts to detect hacking such as login attempts in unusual locations or on unverified devices. (source)

So this means that if you share something Facebook’s “independent” fact-checkers don’t like, it will be labeled as “false” when you post it. For more information on who Facebook’s fact-checkers are, check out this article: people who aren’t even from the United States are vetting American news. The Columbia Journalism Review has also raised a number of criticisms of the program.

And this leads us to today’s lead story.

Facebook employees are outraged about a policy for political ads.

While alternative media sites and dissenting views are being moderated into oblivion, Facebook is not fact-checking political ads at all. Politicians can advertise something entirely untrue about their opponents, their pasts, and basically anything else they want to, with no oversight whatsoever.

Here’s an open letter that employees have posted, publicly criticizing the wildly dishonest policy.

We are proud to work here.

Facebook stands for people expressing their voice. Creating a place where we can debate, share different opinions, and express our views is what makes our app and technologies meaningful for people all over the world.

We are proud to work for a place that enables that expression, and we believe it is imperative to evolve as societies change. As Chris Cox said, “We know the effects of social media are not neutral, and its history has not yet been written.”

This is our company.

We’re reaching out to you, the leaders of this company, because we’re worried we’re on track to undo the great strides our product teams have made in integrity over the last two years. We work here because we care, because we know that even our smallest choices impact communities at an astounding scale. We want to raise our concerns before it’s too late.

Free speech and paid speech are not the same thing.

Misinformation affects us all. Our current policies on fact checking people in political office, or those running for office, are a threat to what FB stands for. We strongly object to this policy as it stands. It doesn’t protect voices, but instead allows politicians to weaponize our platform by targeting people who believe that content posted by political figures is trustworthy.

Allowing paid civic misinformation to run on the platform in its current state has the potential to:

— Increase distrust in our platform by allowing similar paid and organic content to sit side-by-side — some with third-party fact-checking and some without. Additionally, it communicates that we are OK profiting from deliberate misinformation campaigns by those in or seeking positions of power.

— Undo integrity product work. Currently, integrity teams are working hard to give users more context on the content they see, demote violating content, and more. For the Election 2020 Lockdown, these teams made hard choices on what to support and what not to support, and this policy will undo much of that work by undermining trust in the platform. And after the 2020 Lockdown, this policy has the potential to continue to cause harm in coming elections around the world.

Proposals for improvement

Our goal is to bring awareness to our leadership that a large part of the employee body does not agree with this policy. We want to work with our leadership to develop better solutions that both protect our business and the people who use our products. We know this work is nuanced, but there are many things we can do short of eliminating political ads altogether.

These suggestions are all focused on ad-related content, not organic.

1. Hold political ads to the same standard as other ads.

a. Misinformation shared by political advertisers has an outsized detrimental impact on our community. We should not accept money for political ads without applying the standards that our other ads have to follow.

2. Stronger visual design treatment for political ads.

a. People have trouble distinguishing political ads from organic posts. We should apply a stronger design treatment to political ads that makes it easier for people to establish context.

3. Restrict targeting for political ads.

a. Currently, politicians and political campaigns can use our advanced targeting tools, such as Custom Audiences. It is common for political advertisers to upload voter rolls (which are publicly available in order to reach voters) and then use behavioral tracking tools (such as the FB pixel) and ad engagement to refine ads further. The risk with allowing this is that it’s hard for people in the electorate to participate in the “public scrutiny” that we’re saying comes along with political speech. These ads are often so micro-targeted that the conversations on our platforms are much more siloed than on other platforms. Currently we restrict targeting for housing and education and credit verticals due to a history of discrimination. We should extend similar restrictions to political advertising.

4. Broader observance of the election silence periods

a. Observe election silence in compliance with local laws and regulations. Explore a self-imposed election silence for all elections around the world to act in good faith and as good citizens.

5. Spend caps for individual politicians, regardless of source

a. FB has stated that one of the benefits of running political ads is to help more voices get heard. However, high-profile politicians can out-spend new voices and drown out the competition. To solve for this, if you have a PAC and a politician both running ads, there would be a limit that would apply to both together, rather than to each advertiser individually.

6. Clearer policies for political ads

a. If FB does not change the policies for political ads, we need to update the way they are displayed. For consumers and advertisers, it’s not immediately clear that political ads are exempt from the fact-checking that other ads go through. It should be easily understood by anyone that our advertising policies about misinformation don’t apply to original political content or ads, especially since political misinformation is more destructive than other types of misinformation.

Therefore, the section of the policies should be moved from “prohibited content” (which is not allowed at all) to “restricted content” (which is allowed with restrictions).

We want to have this conversation in an open dialog because we want to see actual change.

We are proud of the work that the integrity teams have done, and we don’t want to see that undermined by policy. Over the coming months, we’ll continue this conversation, and we look forward to working towards solutions together.

This is still our company. (source)

So to be clear:

- Facebook does NOT fact check political advertisements.

- Facebook does NOT make it abundantly clear that readers are seeing ads and not articles.

- The candidate dumping in the most money will get the most visibility.

Merriam-Webster defines propaganda as:

Here’s why this is such a huge problem.

A lot of people think that because they personally do not use social media, what happens on social media does not affect them. But Facebook reaches the minds of 1.59 billion people per day. Monthly, that number leaps to 2.41 billion people. And 4.75 billion pieces of content (posts) appear on Facebook per day.

Obviously, those are not all American users. About 70% of Americans use Facebook, and out of those, 74% log in daily. (source)

That’s an awful lot of people who can be influenced by a company that has a policy of silencing alternative voices and promoting politicians whether they’re telling the truth or not.

So it’s pretty obvious who will be interfering in the 2020 election.

And it’s not the Russians.

About Daisy

Daisy Luther is a coffee-swigging, globe-trotting blogger who writes about current events, preparedness, frugality, voluntaryism, and the pursuit of liberty on her website, The Organic Prepper. She is widely republished across alternative media and she curates all the most important news links on her aggregate site, PreppersDailyNews.com. Daisy is the best-selling author of 4 books and runs a small digital publishing company. You can find her on Facebook, Pinterest, and Twitter.

Folks,

As a computer and communications professional with over 45 years in the industry (the past 19 years performing computer/network intrusion detection and prevention), my biggest concern is not “foreign interference” in an election, but rather our own incompetence in conducting an election. We have collectively rushed to embrace electronic voting but ignored some serious consequences. There are 3 companies whose products are used in about 90% of the voting locales. I’ve looked into the situation, and here are some of my findings, which, nationwide, create a “perfect storm”:

1 – Outdated equipment that was never built with robust security in mind (20+ years old).

2 – Obsolete software (running Windows 7 and vendors saying “we cannot upgrade or patch”). (I think this is lame – they just want to sell new hardware for greater profits.)

3 – Proprietary software where the vendors have been (repeatedly) caught lying about capabilities or lack thereof.

4 – Frequently no printed record of ballot is generated. (“Printers cost too much” is the excuse I’ve heard too often).

5 – Physical security issues – One vendor in particular had daisy-chained serial (old-school RS-232) devices, probably without tamper-evident tape on the connectors. You can buy the silly tape at Staples, or in bulk from Uline and other vendors. Search for “security tape” and/or “security labels”.

6 – If wirelessly connected, how well are they secured? (How the vendor implements wireless security needs expert scrutiny to prevent a “man-in-the-middle” attack.)

7 – Chain of custody over transport of memory devices (SIM cards, USB sticks or other electronic media).

8 – Chain of custody over storage of memory devices between elections (SIM cards, USB sticks or other electronic media).

9 – Chain of custody of devices used for polling, both in transport and storage. How well are they secured between elections?

10 – Poorly trained staff at polling places (many are retirees with negligible computer skills).

11 – Very poor or no “certification standards” are applied.

12 – Few or no cybersecurity specialists in state attorneys general’s offices.

13 – No money for outside technical consultants.

14 – No money to replace equipment.

I have more to add, but this ought to give you an idea how awful the situation has become.

A more in-depth source:

https://www.verifiedvoting.org/

Watch the video “How I Hacked…” and follow the links from the main page to some real-world horror stories.

How many of those problems are deliberate, in order to commit voter fraud?

Richard,

What I have seen is ineptitude and ignorance. No forensic evidence has crossed my desk to indicate deliberate manipulation by the vendor(s). The certification standards, such as they are, when applied, will show that no votes are manipulated by the devices AS DELIVERED BY THE VENDOR (sorry about the caps, no italics available). What happens to the machines in storage, and to the data in transit, is where the problems arise. The problems are compounded by the voting authorities having no background in computer and communications security. Budget constraints limit what they can do to be proactive. There are on the order of 10,000 voting jurisdictions (counties, cities, other municipalities, tribal lands, etc.) across the US. Most are beholden to their state attorney general’s office for how to implement an election. AGs’ offices tend to be staffed by lawyers, not computer people equipped to detect and prevent the bad things that can happen.

Can an outcome be rigged? Yes. On a large scale, not easy. I recall growing up in the Chicago area for the 1960 presidential election. Some precincts reported more votes for Kennedy than there were people (adults and kids combined) in the precinct. They didn’t need to spoof a poorly designed and implemented computer “e-vote” system to pull that off. Just corrupt people. Please revisit my “chain of custody” comments above.

Social media and the MSM were a bigger source of interference in the 2016 election.

And they will be in the 2020 election.

They will blame outside interference if Trump wins.

I think the majority of Americans have already made up their minds who they will vote for in 2020.

A great article that speaks to many of my concerns, to which I will add one of my own. I believe that the crackdown on independent thinking, the ghosting of free-thinking websites, the rise of masked Bolshevik goon squads along with their snowflake “sky screaming” allies, the cancer-like growth of the surveillance state, the militarization of the police (posse comitatus? We don’t need no stinkin’ posse comitatus!), the Big Tech push for conformity: ALL of these evils were already in the works, and would have been 1000x worse if Hillary had been crowned Queen of the Universe. The reason that the would-be tyrants are so ANGRY ALL THE TIME is that we woke up to their lies, voted our conscience and are literally sticking to our guns. That ghoulish howling you’re hearing from them 24/7 is the frustrated wailing of hate-filled bigots who were hoping to finish the “fundamental transformation of America”. They still thirst to change America from a free nation of free men and women to a socialist “workers paradise” populated by an ant mound of obedient conformists.

Beware, my fellow Americans: their hate will keep building until we have utterly defeated them, or they have killed or enslaved every last one of us.

This is a great article as always Daisy!

Saw some hearings on C-SPAN where social media companies were being quizzed by a congressperson about “fact checking” political ads… Does the alphabet media “fact check” the ads they allow? Somehow I think not.

As a resident of one of those cities where dead people vote, illegal aliens vote, ballot stuffing happens, and other interesting voter practices occur, I hardly think that a few targeted ads make much of a difference. I think that good old political shenanigans are a far greater threat to vote honesty than “fake media”. Furthermore, it seems to me that one party is home to these voter funnies. Most of big tech and “mainstream news” is allied to that one party.

Your denial of Russian interference in the 2016 election is a farce. The investigation clearly showed they were involved and attempted to influence the outcome. Your comments about Facebook aren’t off, but all you are doing, bluntly, is lying when you say the Russians were not involved. That’s been proven.

What I said, bluntly and literally, is that they didn’t influence the outcome of the election. And if you don’t think the United States strives to put a useful leader in office in other countries too, you might want to look into that. All foreign powers hope the leaders of other major powers will be favorable to them. As well, I personally knew many of the bloggers accused of being Russian assets – had dinner at their homes, went to their kids’ sporting events, was in a wedding party, etc.

NPR was/is pushing the Russian interference narrative pretty hard. As if even being exposed to a Facebook ad, a voter would lose all self control, and independent thought.

They are the same group who believes that if you read some wacko’s manifesto, the manifesto has the power to turn you into the same wacko.

If anyone is to be accused of interfering with a fair and open democratic process of nominating a candidate, it is the Establishment DNC: Gabbard stokes fears among Democrats, https://thehill.com/homenews/house/468167-gabbard-stokes-fears-among-democrats

The GOP is just as much to blame for shutting down GOP primaries.